I wanted a simple thing: when a package arrives at my door, play a sound effect through the nearest security camera’s speaker. What followed was a deep debugging session involving RTSP backchannels, AAC frame pacing, and spectrogram analysis. Here’s how I got it working.

The Setup

I run about 15 Dahua and Lorex IP cameras around my property, managed through Home Assistant with the Dahua custom integration (installed via HACS). Several cameras have built-in speakers, and the integration exposes them as media_player entities. The goal: trigger a “Hallelujah” sound effect on the camera that detects a package.

Problem 1: No Sound At All

The first attempt produced silence. The media_player.play_media service call completed without errors, but nothing came from the speaker. Time to investigate.

Checking the Hardware

First, verify the camera actually has a speaker:

```shell
curl -s --digest -u admin:PASSWORD \
  "http://CAMERA_IP/cgi-bin/devAudioOutput.cgi?action=getCollect"
# result=1 means speaker is present
```

Speaker confirmed. Next, check whether audio encoding is enabled on the camera, a prerequisite for the RTSP backchannel:

```shell
curl -s --digest -u admin:PASSWORD -g \
  "http://CAMERA_IP/cgi-bin/configManager.cgi?action=getConfig&name=Encode[0].MainFormat[0]" \
  | grep AudioEnable
```

The camera reported `AudioEnable=false`. That’s the problem. Without audio encoding enabled, the camera won’t advertise a backchannel audio track in its RTSP DESCRIBE response. No backchannel means no speaker output.

The Fix

```shell
curl -s --digest -u admin:PASSWORD -g \
  "http://CAMERA_IP/cgi-bin/configManager.cgi?action=setConfig&Encode[0].MainFormat[0].AudioEnable=true&Encode[0].ExtraFormat[0].AudioEnable=true"
```

After enabling audio, the RTSP DESCRIBE response includes a sendonly audio track (trackID=5), which is the ONVIF backchannel the integration uses to send audio to the speaker.
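To confirm the backchannel is there without eyeballing raw output, look for an audio media section marked `a=sendonly` in the SDP body of the DESCRIBE response. Below is a minimal sketch; the sample SDP is illustrative (only the `trackID=5` control value comes from the camera described above), and `find_backchannel_track` is a hypothetical helper, not a function from the integration.

```python
from typing import Optional


def find_backchannel_track(sdp: str) -> Optional[str]:
    """Return the control value of the first sendonly audio track, or None.

    SDP is line-oriented: each "m=" line opens a media section, and the
    "a=" attribute lines after it belong to that section.
    """
    sections = []  # list of (media_type, [attribute, ...])
    for raw in sdp.splitlines():
        line = raw.strip()
        if line.startswith("m="):
            media_type = line[2:].split(" ", 1)[0]
            sections.append((media_type, []))
        elif sections and line.startswith("a="):
            sections[-1][1].append(line[2:])

    for media_type, attrs in sections:
        if media_type == "audio" and "sendonly" in attrs:
            for attr in attrs:
                if attr.startswith("control:"):
                    return attr[len("control:"):]
    return None


# Illustrative SDP: one video track, one normal (recvonly) audio track,
# and the sendonly backchannel track.
SAMPLE_SDP = """v=0
o=- 2251938210 2251938210 IN IP4 0.0.0.0
s=Media Server
t=0 0
m=video 0 RTP/AVP 96
a=control:trackID=0
a=rtpmap:96 H264/90000
a=recvonly
m=audio 0 RTP/AVP 104
a=control:trackID=1
a=rtpmap:104 MPEG4-GENERIC/8000/1
a=recvonly
m=audio 0 RTP/AVP 104
a=control:trackID=5
a=rtpmap:104 MPEG4-GENERIC/8000/1
a=sendonly
"""

print(find_backchannel_track(SAMPLE_SDP))  # trackID=5
```

If audio encoding is disabled, the sendonly section is simply absent and the helper returns `None`.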

I added detection for this condition to the integration—it now logs a warning at startup if audio encoding is disabled, and provides an enable_audio service on the media player entity to fix it without manual curl commands.
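The check itself boils down to reading the `getConfig` response, which comes back as plain `key=value` lines, and looking at the `AudioEnable` entries. A minimal sketch, assuming the `table.`-prefixed key format Dahua firmware returns for `getConfig`; `disabled_audio_keys` and `enable_audio_path` are hypothetical helper names, not the integration’s actual code. The second helper builds the same kind of `setConfig` request as the curl fix above.

```python
def disabled_audio_keys(config_text: str) -> list:
    """Return config keys whose AudioEnable value is false.

    Assumes lines like "table.Encode[0].MainFormat[0].AudioEnable=false".
    """
    keys = []
    for line in config_text.splitlines():
        key, sep, value = line.strip().partition("=")
        if sep and key.endswith(".AudioEnable") and value.strip().lower() == "false":
            # Drop the "table." prefix so the key can be fed back to setConfig.
            keys.append(key[len("table."):] if key.startswith("table.") else key)
    return keys


def enable_audio_path(keys: list) -> str:
    """Build a configManager.cgi setConfig path that sets each key to true."""
    params = "&".join(f"{key}=true" for key in keys)
    return f"/cgi-bin/configManager.cgi?action=setConfig&{params}"


response = "table.Encode[0].MainFormat[0].AudioEnable=false\r\n"
missing = disabled_audio_keys(response)
if missing:
    # A startup check would log a warning here instead of printing.
    print("Audio encoding disabled:", missing)
    print(enable_audio_path(missing))
```

Sending the generated path still needs digest authentication against the camera, which this sketch leaves out.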

Problem 2: Audio Plays, But Sounds Terrible

With audio encoding enabled, sound came out of the speaker—but it was a garbled mess, compressed into a brief burst. To diagnose this properly, I needed data, not just ears.

Spectrogram-Based Debugging

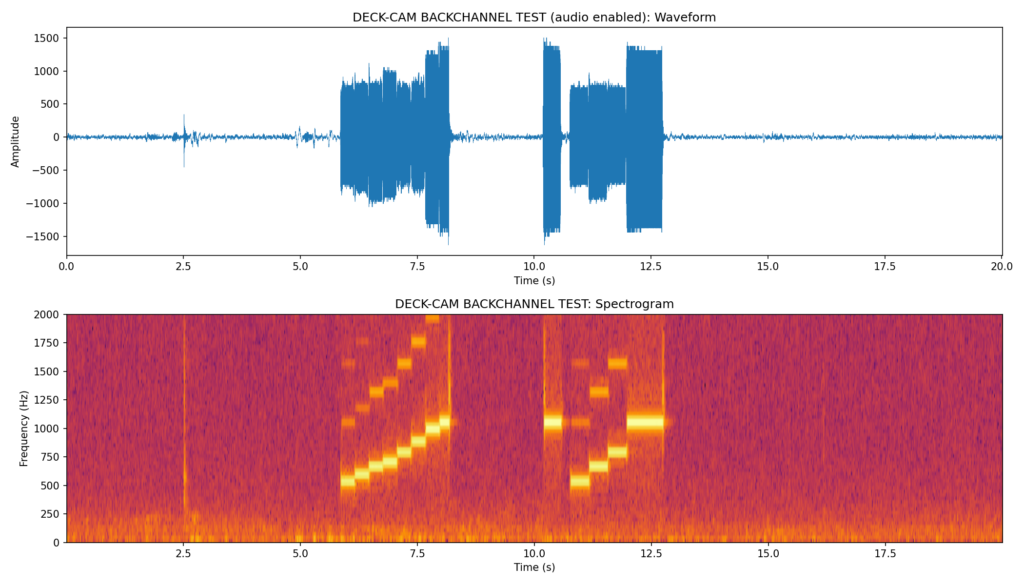

I set up a recording pipeline: play audio on one camera’s speaker while recording from a nearby camera’s microphone, then generate spectrograms for visual comparison.

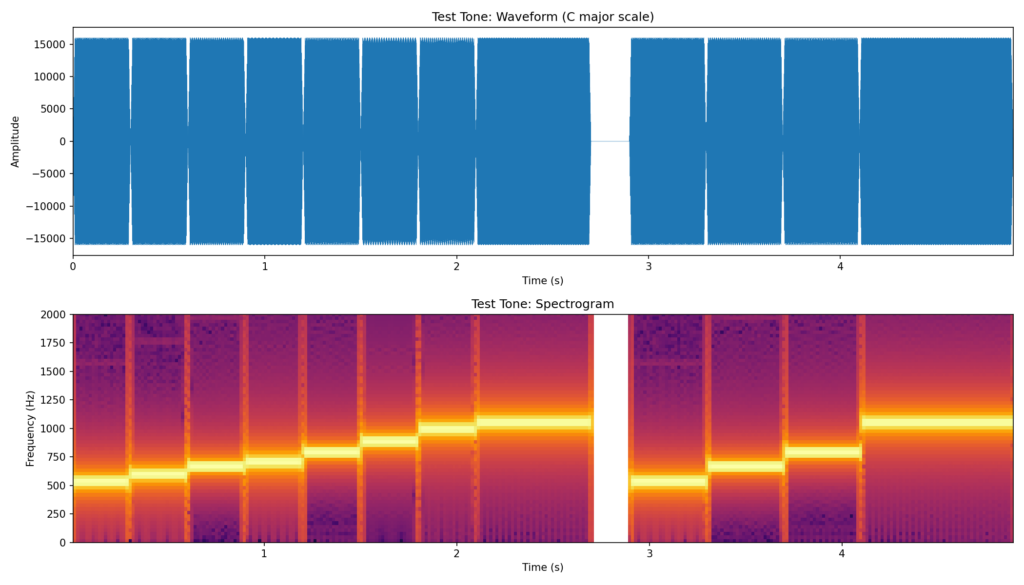
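A spectrogram is a short-time Fourier transform: slice the recording into overlapping, windowed frames and take the magnitude spectrum of each. The post doesn’t say which tools produced its spectrograms, so the sketch below is a dependency-free stand-in rather than the author’s scripts. It uses a naive DFT (fine for short 8 kHz clips, far slower than an FFT library) and also reports the loudest frequency in each frame, which is enough to read a staircase pattern numerically.

```python
import cmath
import math


def stft_magnitudes(samples, frame_size=256, hop=128):
    """Return one magnitude spectrum (bins 0..frame_size/2) per frame."""
    # Hann window to reduce spectral leakage at frame edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_size) for n in range(frame_size)]
    # Precomputed twiddle factors e^(-2*pi*i*m/N), indexed by (k*n) mod N.
    twiddle = [cmath.exp(-2j * math.pi * m / frame_size) for m in range(frame_size)]
    spectra = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_size)]
        spectrum = []
        for k in range(frame_size // 2 + 1):
            # Naive DFT for bin k.
            acc = sum(frame[n] * twiddle[(k * n) % frame_size] for n in range(frame_size))
            spectrum.append(abs(acc))
        spectra.append(spectrum)
    return spectra


def dominant_frequencies(samples, sample_rate, frame_size=256, hop=128):
    """Frequency (Hz) of the strongest bin in each STFT frame."""
    result = []
    for spectrum in stft_magnitudes(samples, frame_size, hop):
        peak_bin = max(range(len(spectrum)), key=spectrum.__getitem__)
        result.append(peak_bin * sample_rate / frame_size)
    return result
```

At 8 kHz with 256-sample frames, each bin is 31.25 Hz wide, so a 440 Hz note shows up as 437.5 Hz. A correctly paced scale gives a run of frames per note; a burst-dumped clip squeezes the notes into far fewer frames.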

Source File

First, I generated a C major scale test tone—its staircase frequency pattern is easy to identify in spectrograms:

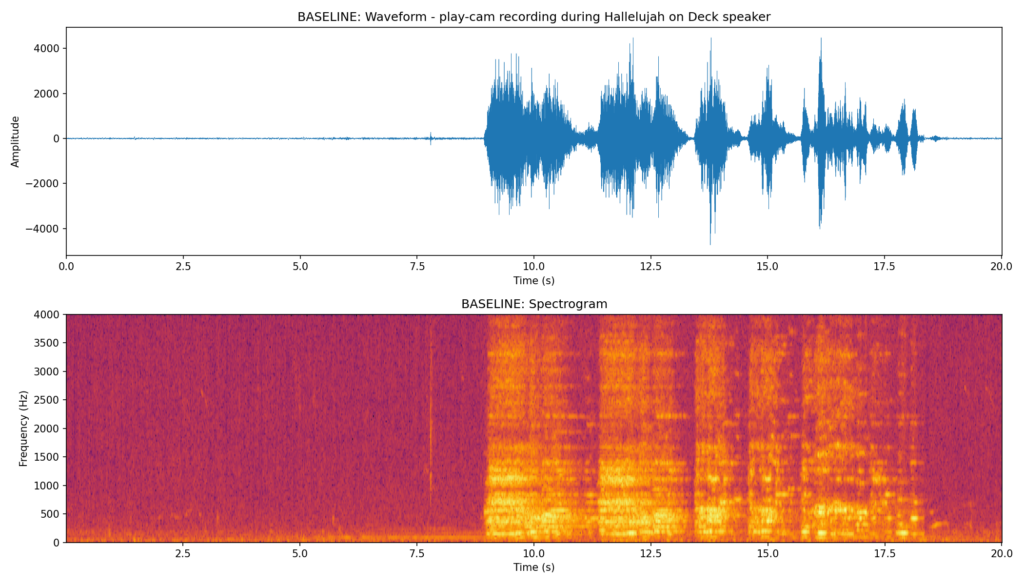

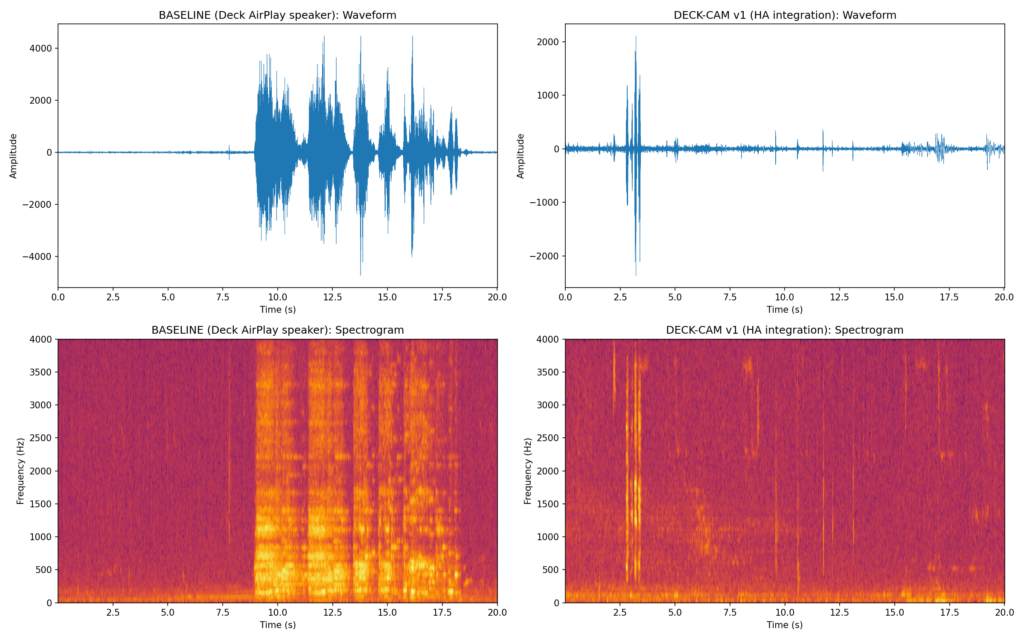
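A test tone like this needs only the standard library. The sketch below assumes equal-tempered frequencies for C4 through C5, half a second per note, and 8 kHz mono 16-bit output to match the camera’s audio format; the note length and amplitude are arbitrary choices, not values from the original test scripts, and `make_scale_wav` is a hypothetical name.

```python
import math
import struct
import wave

# Equal-tempered C major scale from C4 to C5 (A4 = 440 Hz).
C_MAJOR_HZ = [261.63, 293.66, 329.63, 349.23, 392.00, 440.00, 493.88, 523.25]


def scale_samples(sample_rate=8000, note_seconds=0.5, amplitude=0.5):
    """Return 16-bit integer samples for the scale, one note after another."""
    per_note = int(sample_rate * note_seconds)
    samples = []
    for freq in C_MAJOR_HZ:
        for n in range(per_note):
            value = amplitude * math.sin(2 * math.pi * freq * n / sample_rate)
            samples.append(int(value * 32767))
    return samples


def make_scale_wav(path, sample_rate=8000, note_seconds=0.5):
    """Write the scale as a mono 16-bit PCM WAV file; return the sample count."""
    samples = scale_samples(sample_rate, note_seconds)
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit PCM
        wav.setframerate(sample_rate)
        wav.writeframes(struct.pack(f"<{len(samples)}h", *samples))
    return len(samples)


make_scale_wav("c_major_scale.wav")  # 8 notes x 4000 samples = 4 seconds
```

Because each note has a known start time, any pacing error shows up directly as a misplaced step in the spectrogram.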

Baseline: AirPlay Speaker

For reference, I played the Hallelujah sound effect through a high-quality AirPlay speaker (“Deck”) and recorded it on a nearby camera:

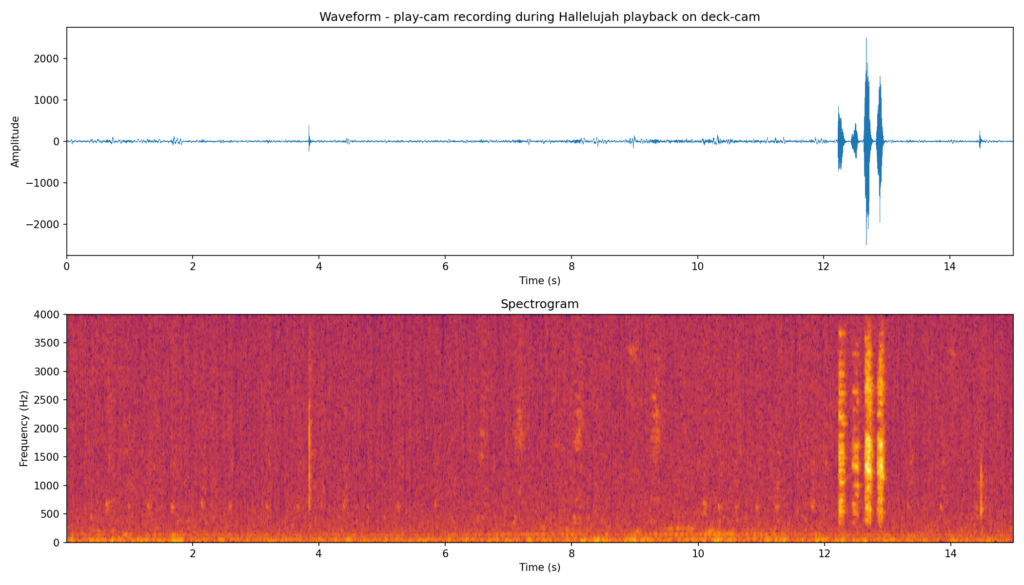

Attempt 1: Through the Camera (Broken)

Here’s what the camera speaker produced with the original code:

The entire clip was being dumped in a short burst. Clearly a pacing issue.

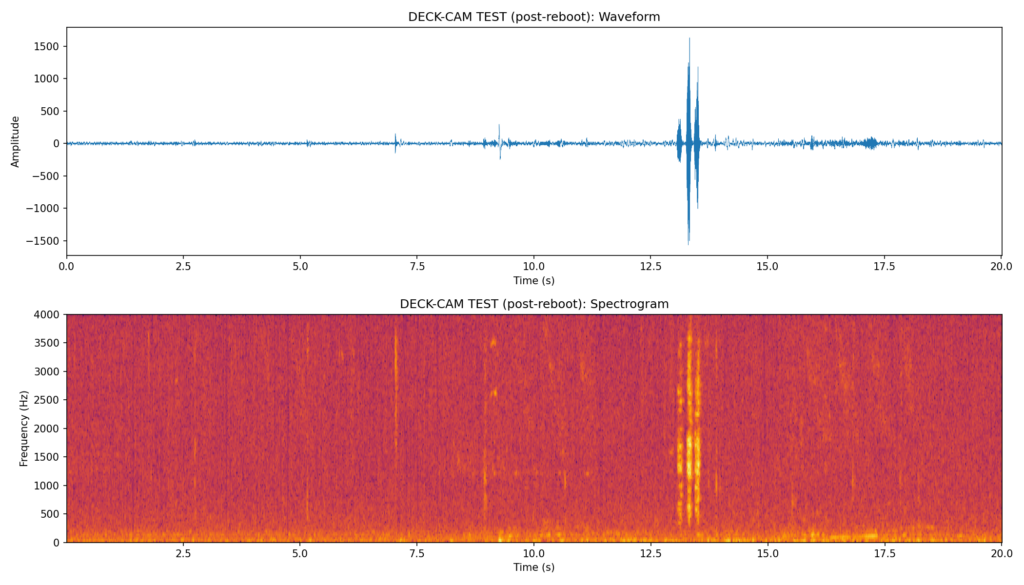

Attempt 2: After Reboot (Still Broken)

Finding the Root Cause

The integration converts audio to AAC (8 kHz mono, 1024 samples per frame) and sends it via RTSP backchannel. The frame pacing code calculated the interval as:

```python
frame_interval = duration / len(frames)
```

The problem: when audio is piped through ffmpeg (which is how the HA integration converts media files), ffmpeg doesn’t report a `Duration:` for piped input. So `duration` was 0, `frame_interval` was 0, and every frame was sent instantly.
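To see how silently this fails, here is a hypothetical reconstruction of a duration-based calculation: it scrapes the `Duration:` line from ffmpeg’s log output and falls back to 0 when the line is missing or reads `N/A`. The helper names and log lines are illustrative, not the integration’s actual code.

```python
import re

# Matches e.g. "Duration: 00:00:05.04" in ffmpeg's stderr banner.
DURATION_RE = re.compile(r"Duration:\s*(\d+):(\d{2}):(\d{2}(?:\.\d+)?)")


def parse_duration(ffmpeg_log: str) -> float:
    """Seconds from ffmpeg's Duration line, or 0.0 if absent or N/A."""
    match = DURATION_RE.search(ffmpeg_log)
    if not match:
        return 0.0
    hours, minutes, seconds = match.groups()
    return int(hours) * 3600 + int(minutes) * 60 + float(seconds)


def duration_based_interval(ffmpeg_log: str, frame_count: int) -> float:
    """The fragile approach: spread the clip's duration over its frames."""
    return parse_duration(ffmpeg_log) / frame_count


# File input: a 5.12 s clip over 40 frames gives roughly 0.128 s per frame.
print(duration_based_interval("  Duration: 00:00:05.12, start: 0.000000", 40))
# Piped input: no usable duration, so the interval collapses to 0.0.
print(duration_based_interval("  Duration: N/A, start: 0.000000", 40))
```

Nothing raises an error in the piped case; the sender just gets an interval of zero and dumps every frame at once.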

The Fix: Fixed Frame Interval

AAC at 8 kHz uses 1024 samples per frame. That’s a fixed interval:

```python
frame_interval = 1024.0 / 8000.0  # 0.128 seconds per frame
```

No need to parse duration at all. Each AAC frame represents exactly 128ms of audio.
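Knowing the interval is half the job; the sender also has to hold to it. A minimal sketch of deadline-based pacing, assuming an async `send` callable that writes one AAC frame to the backchannel (a stand-in, not the integration’s API). Each frame is scheduled at `start + index * frame_interval` on a monotonic clock, so small delays in one iteration don’t accumulate into drift the way a fixed `sleep(frame_interval)` after each send would.

```python
import asyncio
import time
from typing import Awaitable, Callable, Iterable

SAMPLES_PER_FRAME = 1024  # samples per AAC frame
SAMPLE_RATE = 8000        # camera backchannel rate (8 kHz mono)
FRAME_INTERVAL = SAMPLES_PER_FRAME / SAMPLE_RATE  # 0.128 s


async def send_paced(
    frames: Iterable[bytes],
    send: Callable[[bytes], Awaitable[None]],
    frame_interval: float = FRAME_INTERVAL,
) -> int:
    """Send frames at a steady rate; return how many were sent."""
    start = time.monotonic()
    count = 0
    for index, frame in enumerate(frames):
        # Target send time for this frame, measured from the start of playback.
        deadline = start + index * frame_interval
        delay = deadline - time.monotonic()
        if delay > 0:
            await asyncio.sleep(delay)
        await send(frame)
        count += 1
    return count
```

With 40 frames this takes about 39 × 0.128 ≈ 5 seconds, the length of the audio itself, instead of finishing almost immediately.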

RTSP Backchannel Test (Fixed Pacing)

Testing with the test tone through the RTSP backchannel directly, with correct 128ms pacing:

The staircase pattern is clearly visible—each note is distinct and properly timed.

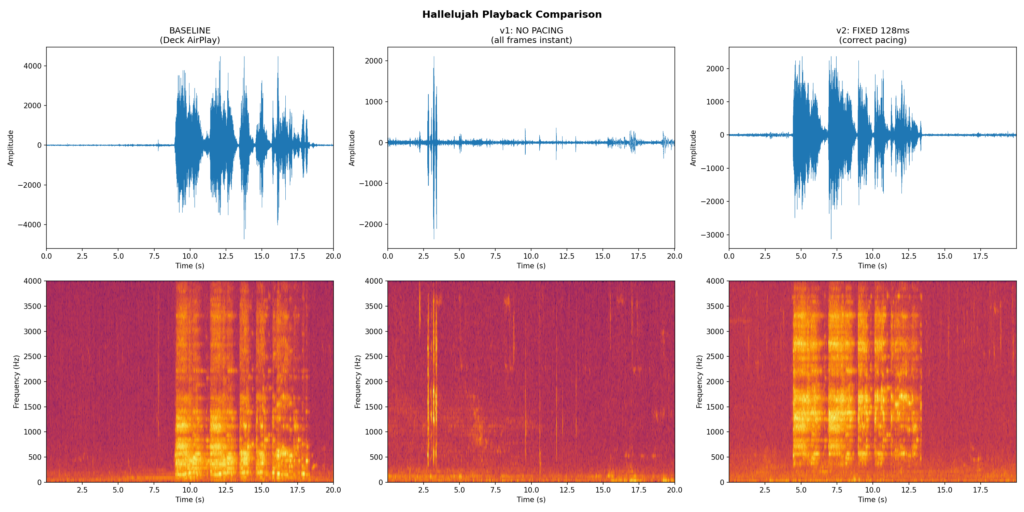

Side-by-Side Comparisons

Here’s the before and after with the actual Hallelujah sound effect:

The v2 fix (right panel) closely matches the baseline (left panel). The harmonic content is clearly visible and properly spread across the full duration of the clip.

The Integration Changes

I contributed these fixes back to the Dahua integration:

- Fixed RTSP backchannel frame pacing: Use the mathematically correct 128ms interval (1024 samples / 8000 Hz) instead of trying to derive it from ffmpeg’s duration output.

- Audio encoding detection: At startup, the integration checks whether `AudioEnable` is set in the camera’s encode config and logs a warning if not.

- enable_audio service: A new Home Assistant service on media player entities that enables audio encoding on the camera without needing curl or the camera’s web UI.

- Lorex compatibility: Lorex cameras (Dahua OEM) don’t support the `audio.cgi` HTTP endpoint. The integration detects this and falls back to the RTSP backchannel automatically.
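The Lorex fallback is an ordered strategy: try the HTTP `audio.cgi` path first and, if the camera rejects it, switch to the RTSP backchannel. A sketch under stated assumptions: the transport callables, the `UnsupportedEndpoint` exception, and remembering the choice for later plays are all illustrative, not the integration’s actual implementation.

```python
from typing import Callable


class UnsupportedEndpoint(Exception):
    """Hypothetical error a transport raises when the camera lacks an endpoint."""


class SpeakerSender:
    """Try HTTP audio.cgi first; fall back to the RTSP backchannel."""

    def __init__(self, http_send: Callable[[bytes], None], rtsp_send: Callable[[bytes], None]):
        self._http_send = http_send
        self._rtsp_send = rtsp_send
        self._use_rtsp = False  # flipped once HTTP is known not to work

    def play(self, audio: bytes) -> str:
        """Send audio and return which transport was used."""
        if not self._use_rtsp:
            try:
                self._http_send(audio)
                return "http"
            except UnsupportedEndpoint:
                # e.g. Lorex firmware without audio.cgi: stop trying HTTP.
                self._use_rtsp = True
        self._rtsp_send(audio)
        return "rtsp"
```

Caching the decision avoids paying for a failed HTTP request on every play, which matters on cameras whose HTTP servers are slow or flaky.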

The Automation

With working speaker audio, the automation is straightforward. Each camera that can detect packages triggers the sound on its own speaker, throttled to once per hour per camera:

```yaml
automation:
  - alias: Package Arrived play sound
    triggers:
      - entity_id: sensor.front_entry_package_count
        above: 0
        trigger: numeric_state
        id: front_entry
      - entity_id: sensor.garage_l_package_count
        above: 0
        trigger: numeric_state
        id: garage_left
      # ... more cameras
    actions:
      # `speaker` and `last_played` are per-camera values for the triggering
      # camera (its speaker entity and the time it last played the sound);
      # their definitions are not shown here.
      - condition: template
        value_template: >-
          {{ now().timestamp() - last_played > 3600 }}
      - action: media_player.play_media
        target:
          entity_id: "{{ speaker }}"
        data:
          media_content_id: media-source://media_source/local/Hallelujah-sound-effect.mp3
          media_content_type: music
```

Lessons Learned

- Spectrograms are invaluable for audio debugging. They immediately show whether the problem is pacing, encoding, distortion, or something else entirely.

- Record from a second camera to capture what the speaker actually outputs, rather than relying on subjective listening.

- Fixed-interval pacing is more robust than duration-based calculation for streaming protocols. The math is simple: samples_per_frame / sample_rate = interval.

- Check audio encoding first. On Dahua/Lorex cameras, the speaker won’t work unless `AudioEnable=true` is set in the encode config. This setting persists across reboots.

- Lorex quirks: Lorex cameras are Dahua OEM but run different firmware. They don’t support `audio.cgi` but do support the RTSP ONVIF backchannel. Some have flaky HTTP servers after soft reboots.

The complete code changes are in the Dahua integration fork, and the manual testing scripts (spectrogram generation, recording, analysis) are in the manual_tests/ directory.